Quick test: installing Ollama as an LXC container via a script:

    bash -c "$(wget -qLO - https://github.com/tteck/Proxmox/raw/main/ct/ollama.sh)"

We'll see how it goes … for now, my NVIDIA card (or the BIOS) does not support Proxmox passthrough.

    root@balkany:~# dmesg | grep -e DMAR -e IOMMU | grep "enable"
    [ 0.333769] DMAR: IOMMU enabled
    root@balkany:~# dmesg | grep 'remapping'
    [ 0.821036] DMAR-IR: Enabled IRQ remapping in xapic mode
    [ 0.821038] x2apic: IRQ remapping doesn't support X2APIC mode
    # lspci -nn | grep 'NVIDIA'
    0a:00.0 VGA compatible controller [0300]: NVIDIA Corporation GF100GL [Quadro 4000] [10de:06dd] (rev a3)
    0a:00.1 Audio device [0403]: NVIDIA Corporation GF100 High Definition Audio Controller [10de:0be5] (rev a1)
    # cat /etc/default/grub | grep "GRUB_CMDLINE_LINUX_DEFAULT"
    GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on iommu=pt video=vesafb:off video=efifb:off initcall_blacklist=sysfb_init
    # efibootmgr -v
    EFI variables are not supported on this system.
    # cat /etc/modules
    vfio
    vfio_iommu_type1
    vfio_pci
    vfio_virqfd
    # cat /etc/modprobe.d/pve-blacklist.conf | grep nvidia
    blacklist nvidiafb
    blacklist nvidia

So I added this:

    # cat /etc/modprobe.d/iommu_unsafe_interrupts.conf
    options vfio_iommu_type1 allow_unsafe_interrupts=1
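To confirm that the option is actually active on the running kernel, the module parameter can be read back from sysfs. This is a minimal sketch: the function name `check_unsafe_interrupts` is made up for illustration, and it assumes the `vfio_iommu_type1` module is loaded so that its parameter file exists.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether vfio_iommu_type1 currently allows unsafe interrupts.
# $1 (optional): path to the parameter file, defaults to the usual sysfs location.
check_unsafe_interrupts() {
    local param="${1:-/sys/module/vfio_iommu_type1/parameters/allow_unsafe_interrupts}"
    if [ ! -r "$param" ]; then
        # No parameter file: the module is most likely not loaded.
        echo "vfio_iommu_type1 does not seem to be loaded (missing $param)"
        return 1
    fi
    case "$(cat "$param")" in
        Y|1) echo "allow_unsafe_interrupts is enabled" ;;
        *)   echo "allow_unsafe_interrupts is disabled"; return 1 ;;
    esac
}
```

Calling `check_unsafe_interrupts` with no argument reads the real sysfs file. If it still reports the option as disabled after editing the file in `/etc/modprobe.d`, the initramfs probably needs to be rebuilt (`update-initramfs -u -k all`) and the host rebooted.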

The NVIDIA card does end up in a single IOMMU group of its own:
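For reference, group membership can be read from sysfs. The sketch below is one way to list it; the function name `list_iommu_groups` is made up for illustration, and it assumes the kernel exposes groups under `/sys/kernel/iommu_groups`, which is only the case when the IOMMU is enabled.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print every PCI device together with its IOMMU group.
# $1 (optional): root of the IOMMU group tree, defaults to the usual sysfs path.
list_iommu_groups() {
    local root="${1:-/sys/kernel/iommu_groups}"
    local dev group addr
    for dev in "$root"/*/devices/*; do
        [ -e "$dev" ] || continue      # glob matched nothing: no groups exposed
        group="${dev#"$root"/}"        # strip the root prefix -> "N/devices/ADDR"
        group="${group%%/*}"           # keep only the group number
        addr="${dev##*/}"              # PCI address, e.g. 0000:0a:00.0
        printf 'group %s: %s\n' "$group" "$addr"
    done
}
# Usage: list_iommu_groups | grep '0a:00'
```

With the card from the `lspci` output above, filtering on `0a:00` should show both functions (`0a:00.0` and `0a:00.1`) under the same group number, and no other device in that group.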

When I run the script, it ends with an error:

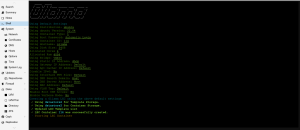

       ____  ____
      / __ \/ / /___ _____ ___  ____ _
     / / / / / / __ `/ __ `__ \/ __ `/
    / /_/ / / / /_/ / / / / / / /_/ /
    \____/_/_/\__,_/_/ /_/ /_/\__,_/
    Using Default Settings
    Using Distribution: ubuntu
    Using ubuntu Version: 22.04
    Using Container Type: 1
    Using Root Password: Automatic Login
    Using Container ID: 114
    Using Hostname: ollama
    Using Disk Size: 24GB
    Allocated Cores 4
    Allocated Ram 4096
    Using Bridge: vmbr0
    Using Static IP Address: dhcp
    Using Gateway IP Address: Default
    Using Apt-Cacher IP Address: Default
    Disable IPv6: No
    Using Interface MTU Size: Default
    Using DNS Search Domain: Host
    Using DNS Server Address: Host
    Using MAC Address: Default
    Using VLAN Tag: Default
    Enable Root SSH Access: No
    Enable Verbose Mode: No
    Creating a Ollama LXC using the above default settings
    ✓ Using datastore2 for Template Storage.
    ✓ Using datastore2 for Container Storage.
    ✓ Updated LXC Template List
    ✓ LXC Container 114 was successfully created.
    ✓ Started LXC Container
    bash: warning: setlocale: LC_ALL: cannot change locale (en_US.UTF-8)
    //bin/bash: warning: setlocale: LC_ALL: cannot change locale (en_US.UTF-8)
    ✓ Set up Container OS
    ✓ Network Connected: 192.168.1.45
    ✓ IPv4 Internet Connected
    ✗ IPv6 Internet Not Connected
    ✓ DNS Resolved github.com to 140.82.121.3
    ✓ Updated Container OS
    ✓ Installed Dependencies
    ✓ Installed Golang
    ✓ Set up Intel® Repositories
    ✓ Set Up Hardware Acceleration
    ✓ Installed Intel® oneAPI Base Toolkit
    / Installing Ollama (Patience)
    [ERROR] in line 23: exit code 0: while executing command "$@" > /dev/null 2>&1
    The silent function has suppressed the error, run the script with verbose mode enabled, which will provide more detailed output.
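The error message shows the failing step being run as `"$@" > /dev/null 2>&1`, which is why nothing useful is printed. As an illustration of the alternative, here is a sketch of a wrapper that stays quiet on success but keeps the output and shows it on failure. `run_logged` is a hypothetical helper written for this post, not something the install script provides.

```shell
#!/usr/bin/env bash
# Hypothetical helper, not part of the install script: run a command quietly,
# but capture its output and print it to stderr if the command fails.
run_logged() {
    local log rc=0
    log="$(mktemp)"
    # "|| rc=$?" records the exit code without tripping "set -e" in the caller.
    "$@" >"$log" 2>&1 || rc=$?
    if [ "$rc" -ne 0 ]; then
        echo "command failed with exit code $rc: $*" >&2
        cat "$log" >&2
    fi
    rm -f "$log"
    return "$rc"
}
```

Wrapping a step this way, for example `run_logged apt-get update`, gives the same silent behaviour when everything works, while a failure prints the real exit code followed by the captured output.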

Misery.